For the past two decades, the API was king.

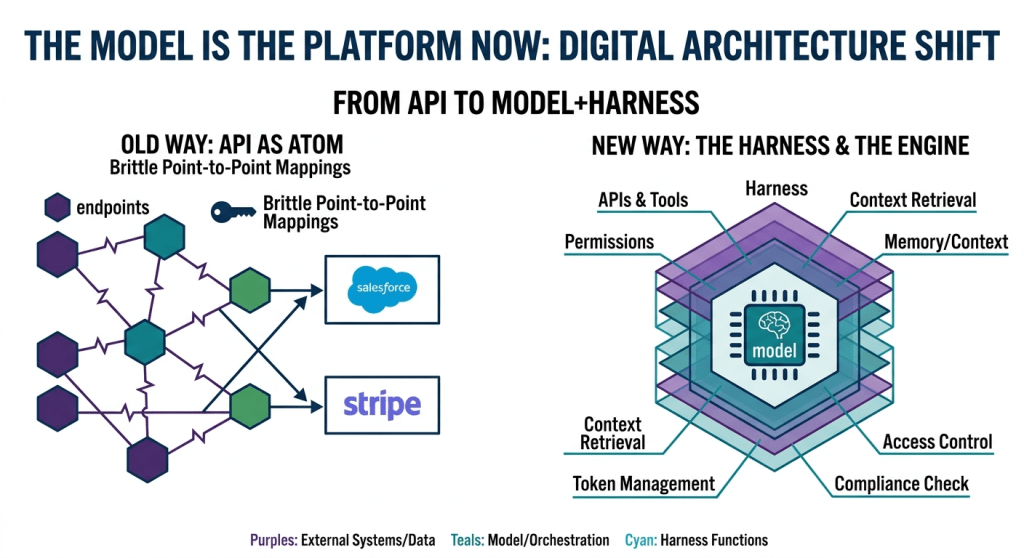

If you wanted your software to talk to Salesforce, you called their API. If you wanted to process a payment, you called Stripe’s. The entire digital economy was built on this architecture: discrete systems, locked behind endpoints, exchanging structured data in formats only engineers could love. The API became the atom of business logic — and the companies who owned the best APIs owned the most leverage.

That era is ending.

Not because APIs are disappearing, but because something more powerful has arrived to sit on top of them: the model. And with it, a new layer called the harness — the operating environment that equips a model with tools, memory, permissions, and guardrails, turning raw intelligence into purposeful, governed action.

Together, models and harnesses are not just a new technology stack. They represent a fundamental shift in how digital systems are conceived, built, and operated. And most organizations are still building as if the old rules apply.

This post is about the four ways those rules have changed — and what it means for the systems you’re building today.

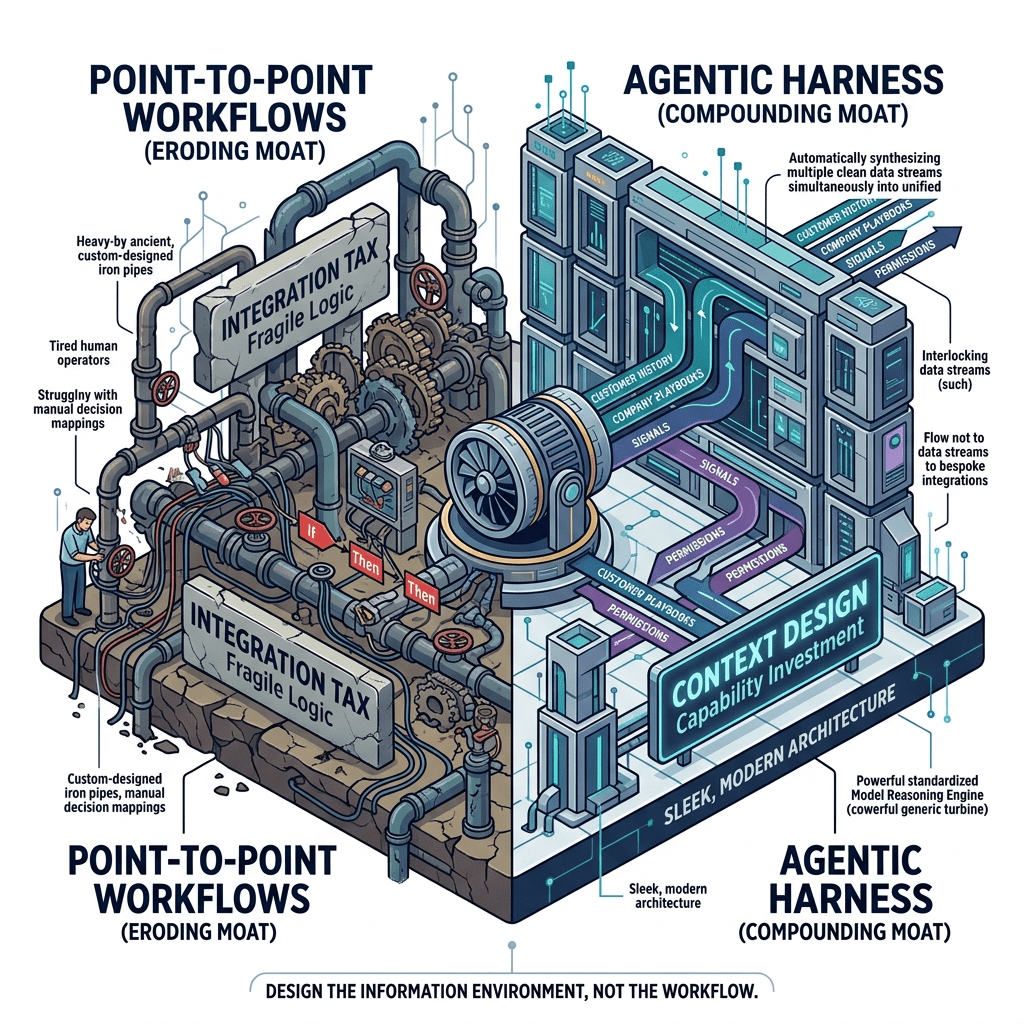

Shift 1: From Point-to-Point Integration to Model-Mediated Integration

The old way: Two systems needed to share data or trigger actions? You built an integration. That meant agreeing on a data format, writing transformation logic, handling errors, maintaining the mapping as both systems evolved, and doing it all over again every time a new system entered the picture. A mid-sized enterprise might have hundreds of such integrations — a web of brittle connections that became progressively harder to maintain as the system landscape grew. The joke in enterprise IT was that the integration layer cost more to operate than the systems it connected.

The new way: A model doesn’t need a bespoke integration for every pair of systems. It can call a CRM’s API, an ERP’s API, and a calendar’s API — understand the data from all three in natural language — and synthesize a coherent output without a single hand-coded transformation layer in between. The model is the integrator.

This is not a small change. Integration complexity has been one of the primary drivers of enterprise software cost and rigidity for decades. The lock-in that Salesforce, Workday, and ServiceNow enjoyed was not just about their features — it was about the gravitational pull of the integration investments made around them. Switch your CRM and you don’t just replace the CRM; you re-wire everything connected to it.

Model-mediated integration dissolves much of that lock-in. If your harness abstracts over the underlying systems, switching a vendor becomes a configuration change rather than a rewiring project. The pipes matter less when the model can reason over any pipe.
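One way a harness might abstract over the underlying systems is sketched below. This is a minimal illustration, not a prescribed design: `CrmAdapter`, `VendorACrm`, `VendorBCrm`, `lookup_contact_tool`, and the `config` key are all hypothetical names, and the two "vendors" are in-memory stand-ins rather than real APIs.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass
class Contact:
    name: str
    email: str


class CrmAdapter(Protocol):
    """The only CRM surface the harness depends on (hypothetical)."""

    def find_contact(self, email: str) -> Optional[Contact]: ...


class VendorACrm:
    """Hypothetical vendor A: a list of records with 'full_name' and 'mail' keys."""

    def __init__(self, records: list) -> None:
        self._records = records

    def find_contact(self, email: str) -> Optional[Contact]:
        for record in self._records:
            if record["mail"] == email:
                return Contact(name=record["full_name"], email=record["mail"])
        return None


class VendorBCrm:
    """Hypothetical vendor B: a mapping from email address to display name."""

    def __init__(self, names_by_email: dict) -> None:
        self._names_by_email = names_by_email

    def find_contact(self, email: str) -> Optional[Contact]:
        name = self._names_by_email.get(email)
        return Contact(name=name, email=email) if name is not None else None


def lookup_contact_tool(crm: CrmAdapter, email: str) -> str:
    """Tool exposed to the model: returns plain text it can reason over."""
    contact = crm.find_contact(email)
    if contact is None:
        return f"No contact found for {email}."
    return f"{contact.name} <{contact.email}>"


CRMS = {
    "vendor_a": VendorACrm([{"full_name": "Ada Lovelace", "mail": "ada@example.com"}]),
    "vendor_b": VendorBCrm({"ada@example.com": "Ada Lovelace"}),
}

config = {"crm": "vendor_a"}  # change to "vendor_b" to switch vendors
print(lookup_contact_tool(CRMS[config["crm"]], "ada@example.com"))
```

The tool the model sees stays the same whichever vendor sits behind it, which is the sense in which a vendor switch could shrink to a one-line configuration change.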

What this means for how you build: Stop investing in point-to-point integrations as a long-term architecture. Every new system you add to your landscape should be evaluated on the quality of its API surface — not on the richness of its native integrations. Clean, well-documented APIs with consistent authentication patterns are now a first-class requirement when selecting software. The integration tax is being eliminated, but only for organizations that structure their procurement and architecture accordingly.

Shift 2: From Deterministic Workflows to Agentic Reasoning

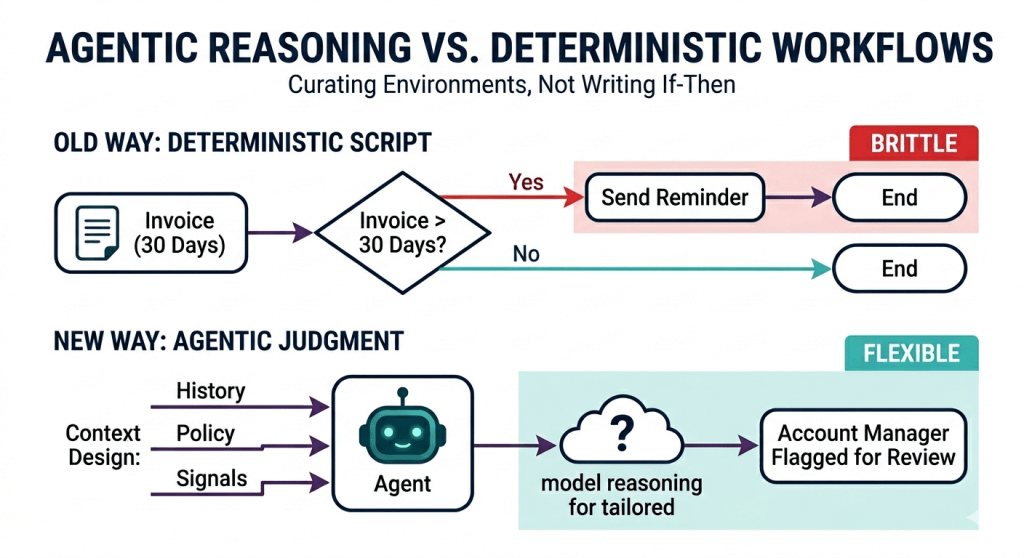

The old way: Business logic was encoded as deterministic workflows. If a customer’s invoice goes unpaid for 30 days, send a reminder. If a support ticket is tagged “urgent,” route it to Tier 2. If inventory drops below threshold, trigger a reorder. These workflows were explicit, predictable, and auditable — and they were brittle. Every edge case required a new branch in the decision tree. Complex processes required complex orchestration engines. And the moment the business changed, the workflows had to be manually updated.

The deeper problem was that deterministic workflows could only handle what their designers anticipated. The real world is full of situations that fall outside the decision tree — and those situations either got ignored, escalated to humans, or produced wrong outcomes silently.

The new way: A model can reason about a situation and decide what to do without being given an explicit script. It can look at an unpaid invoice, check the customer’s relationship history, note that they’re mid-renewal negotiation, and decide that an automated reminder is probably not the right move — flagging it to the account manager instead. That judgment call doesn’t require a new workflow branch. It requires a model with the right context and the right tools.

This is what “agentic” means in practice: systems that don’t just execute predefined steps, but reason about what steps are appropriate given the current situation. Agents can break down complex goals, sequence actions, handle exceptions, and recover from failures — all without a human designing the decision tree in advance.

What this means for how you build: The design question shifts from “what is the correct workflow?” to “what context does the model need to make good decisions?” This is a fundamentally different engineering discipline. You’re no longer writing if-then logic; you’re curating information environments. The model needs access to the right data at the right time — customer context, company policy, historical patterns, current constraints. Engineering effort moves upstream, into context design and data architecture, rather than downstream into workflow orchestration.
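To make the contrast concrete, here is a rough sketch of the unpaid-invoice case. `legacy_rule` stands for the old if-then branch; `InvoiceContext` and `render_context` are hypothetical names for the "information environment" handed to a model. The model call itself is deliberately left out, since it depends on whichever provider and harness you use.

```python
from dataclasses import dataclass, field


def legacy_rule(days_overdue: int) -> str:
    """Old style: one hard-coded branch, blind to everything else."""
    return "send_reminder" if days_overdue >= 30 else "wait"


@dataclass
class InvoiceContext:
    """Facts gathered for one decision (field names are illustrative)."""

    invoice_id: str
    days_overdue: int
    relationship_notes: list = field(default_factory=list)
    policies: list = field(default_factory=list)


def render_context(ctx: InvoiceContext) -> str:
    """Turn gathered facts into the text a model would be asked to reason over."""
    lines = [f"Invoice {ctx.invoice_id} is {ctx.days_overdue} days overdue."]
    lines += [f"Relationship: {note}" for note in ctx.relationship_notes]
    lines += [f"Policy: {policy}" for policy in ctx.policies]
    lines.append("Decide: send_reminder, wait, or escalate_to_account_manager.")
    return "\n".join(lines)


ctx = InvoiceContext(
    invoice_id="INV-1001",
    days_overdue=34,
    relationship_notes=["Customer is mid-renewal negotiation."],
    policies=["Do not send automated reminders during active negotiations."],
)

print(legacy_rule(ctx.days_overdue))  # the rule only ever sees the day count
print(render_context(ctx))            # the model would see the whole picture
```

The rule fires a reminder because it only knows the number 34. The rendered context carries the renewal negotiation and the policy, which is the information a model would need to choose escalation instead.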

It also means rethinking how you handle accountability. Deterministic workflows are auditable by design. Agentic systems require explicit logging, explainability layers, and governance frameworks — so that when a model makes a decision, you can understand why and correct the underlying context if it was wrong.
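As a minimal sketch of what explicit logging could look like, the snippet below records each agent decision together with its stated rationale and the context sources it drew on. `DecisionRecord`, `record_decision`, and every field name are assumptions for illustration; a real system would write to durable storage rather than an in-memory list.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    timestamp: str
    agent: str
    action: str
    rationale: str
    context_sources: list


def record_decision(log: list, agent: str, action: str,
                    rationale: str, context_sources: list) -> DecisionRecord:
    """Append one decision to the log as a JSON line and return the record."""
    record = DecisionRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        agent=agent,
        action=action,
        rationale=rationale,
        context_sources=context_sources,
    )
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record


audit_log: list = []
record_decision(
    audit_log,
    agent="collections-agent",
    action="escalate_to_account_manager",
    rationale="Customer is mid-renewal; policy pauses automated reminders.",
    context_sources=["crm:account-notes", "policy:collections"],
)
print(audit_log[0])
```

Recording the context sources alongside the action is the useful part: when a decision turns out to be wrong, the log points at which input to correct.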

Shift 3: From Human-First UX to Agent-First Design

The old way: Every enterprise application was designed for a human to operate. Screens, menus, dashboards, forms — the entire interface paradigm assumed that a person would sit down, look at information, make a decision, and click something. Even workflow automation was conceived as a way to reduce the number of clicks, not to eliminate the human from the loop entirely.

As a result, the underlying systems were designed around human cognitive patterns. Data was formatted for readability. Processes were structured to guide users step by step. Error messages were written for people. The API, when it existed at all, was often an afterthought — a way for developers to access functionality that was primarily designed for the UI.

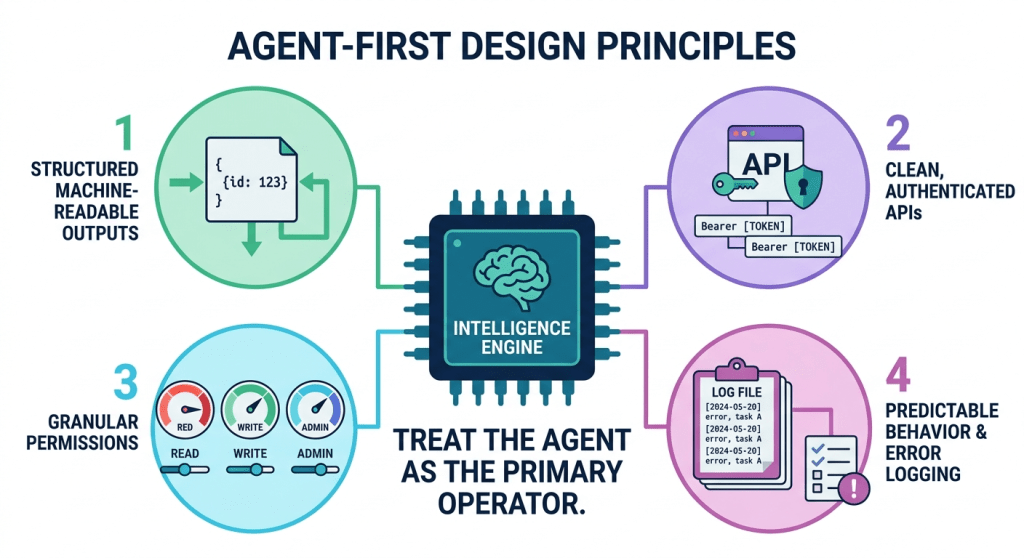

The new way: Increasingly, the primary operator of your systems is not a human — it’s a model. An agent scheduling meetings, processing invoices, triaging support tickets, or updating records doesn’t need a screen. It needs a clean API, structured outputs, clear permission boundaries, and predictable behavior under edge cases.

Systems that were designed human-first are often hostile to agents. They have inconsistent APIs, undocumented behaviors, UI-only workflows with no programmatic equivalent, and authentication flows that assume a human is present to enter a password. Agents trying to operate these systems spend enormous effort compensating for interface friction that was never designed with them in mind.

What this means for how you build: Agent-readiness is now a design criterion, not an afterthought. When building or procuring systems, ask: can a model operate this without a human in the loop? Does it have a clean, authenticated, well-documented API? Are outputs structured and machine-parseable? Are permission models granular enough to give an agent exactly the access it needs — no more, no less?
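The "exactly the access it needs" question can be sketched as scope checks in front of every tool an agent can call. The scope strings (`invoices:read`, `invoices:write`), `AgentGrant`, and the two tool functions are hypothetical, and the invoice store is an in-memory dictionary standing in for a real system.

```python
from dataclasses import dataclass


class PermissionDenied(Exception):
    """Raised when an agent attempts an action outside its grant."""


@dataclass(frozen=True)
class AgentGrant:
    agent: str
    scopes: frozenset  # e.g. {"invoices:read"}; scope names are hypothetical


def require_scope(grant: AgentGrant, scope: str) -> None:
    if scope not in grant.scopes:
        raise PermissionDenied(f"{grant.agent} lacks scope {scope!r}")


def read_invoice(grant: AgentGrant, invoices: dict, invoice_id: str) -> dict:
    require_scope(grant, "invoices:read")
    return dict(invoices[invoice_id])  # return a copy, not the stored record


def mark_invoice_paid(grant: AgentGrant, invoices: dict, invoice_id: str) -> dict:
    require_scope(grant, "invoices:write")
    invoices[invoice_id]["status"] = "paid"
    return {"ok": True, "invoice_id": invoice_id, "status": "paid"}


invoices = {"INV-1001": {"status": "open", "amount": 1200}}
triage_agent = AgentGrant("triage-agent", frozenset({"invoices:read"}))

print(read_invoice(triage_agent, invoices, "INV-1001"))
try:
    mark_invoice_paid(triage_agent, invoices, "INV-1001")
except PermissionDenied as exc:
    # A structured, machine-parseable refusal instead of a login screen.
    print({"ok": False, "error": str(exc)})
```

The read-only agent can inspect the invoice but gets a structured refusal when it tries to change it, which is the kind of predictable, parseable behavior an agent can work with.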

This doesn’t mean removing humans from important decisions. It means being intentional about where human judgment adds value — and designing those handoff points explicitly — rather than having humans involved everywhere by default because the system was never designed for any other option. The interface is increasingly a fallback, not the primary path.

Shift 4: From Monolithic Data Silos to Context-Rich Harnesses

The old way: Data was organized around systems, not around decisions. Your customer data lived in the CRM. Your financial data lived in the ERP. Your communications lived in email and Slack. Each system had its own data model, its own access controls, its own query patterns. Getting a complete picture of anything — a customer, a project, a decision — meant pulling from multiple systems, transforming and reconciling the data, and hoping the result was coherent.

Data warehouses and BI tools tried to solve this by centralizing everything into a single analytical layer. But that layer was read-only, batch-updated, and always somewhat stale. And it was still organized for human analysts running queries, not for models making real-time decisions.

The new way: The harness is the new data layer. When a model needs context to act — who is this customer, what have we promised them, what are our policies, what is the current state of this account — the harness retrieves the right information from the right systems at the right time and presents it as coherent context. The model doesn’t need a data warehouse. It needs a well-designed retrieval layer that knows where to look.
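A retrieval layer that "knows where to look" might, in its simplest form, be a registry of sources queried at runtime. The sketch below assumes three in-memory stand-ins (`CRM`, `ERP`, `POLICIES`) and hypothetical function names; real sources would be API calls or search queries, and real harnesses would add ranking, caching, and access control.

```python
from typing import Callable, Dict

# Stand-ins for real systems; all data and names are hypothetical.
CRM = {"cust-42": "Acme Corp, enterprise tier, renewal due in 3 weeks"}
ERP = {"cust-42": "1 open invoice, 34 days overdue"}
POLICIES = {"collections": "Pause automated reminders during active renewals."}


def from_crm(customer_id: str) -> str:
    return CRM[customer_id]  # raises KeyError (a LookupError) if unknown


def from_erp(customer_id: str) -> str:
    return ERP[customer_id]


def from_policies(_customer_id: str) -> str:
    return POLICIES["collections"]  # policy does not depend on the customer


SOURCES: Dict[str, Callable[[str], str]] = {
    "customer": from_crm,
    "billing": from_erp,
    "policy": from_policies,
}


def build_context(customer_id: str, sources: Dict[str, Callable[[str], str]]) -> str:
    """Query each source at runtime and present the results as one context."""
    sections = []
    for label, fetch in sources.items():
        try:
            body = fetch(customer_id)
        except LookupError:
            body = "(no data available)"  # say so explicitly rather than omit
        sections.append(f"[{label}] {body}")
    return "\n".join(sections)


print(build_context("cust-42", SOURCES))
```

Nothing is copied into a central store here; each source is read when the decision is being made, and gaps are surfaced to the model rather than hidden from it.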

This changes the economics and architecture of data. The question is no longer “how do we centralize all our data?” but “how do we make the right data accessible to models at runtime?” That includes structured data from databases, unstructured data from documents and communications, and institutional knowledge from policy documents, playbooks, and past decisions.

Organizations that have invested in clean, well-governed, accessible data will find this transition easier. Organizations with fragmented, siloed, or poorly documented data will find that their AI initiatives underperform, not because of the models, but because the harnesses can’t be fed.

What this means for how you build: Data governance is no longer a compliance exercise; it’s a capability investment. Every dataset you clean up, every API you document, every policy you write down and make searchable becomes a direct input into the quality of your harnesses. The organizations building the most powerful AI-enabled operations are not necessarily the ones with the best models. They are the ones with the deepest, best-organized context.

The Power Shift: Who This Favors

These four shifts don’t affect all players equally.

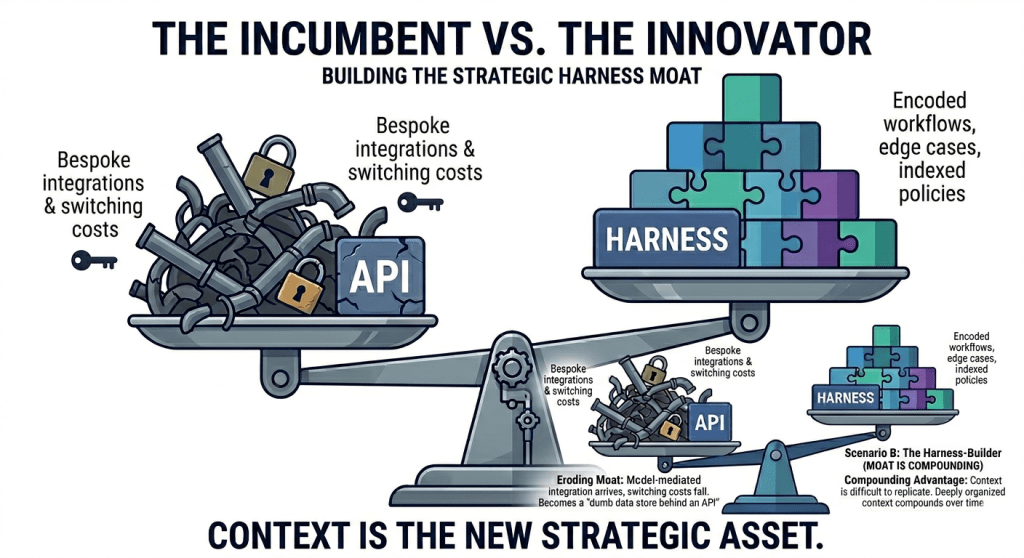

Incumbent software vendors who built their moats around integration complexity are exposed. If point-to-point integrations become less necessary, the switching costs that kept customers locked in erode. Vendors who respond by building excellent AI layers on top of their data advantages will survive. Those who don’t will find their platforms increasingly treated as dumb data stores behind a clean API.

Organizations with proprietary data and institutional context gain significant leverage. The harness that encodes deep knowledge of your customers, your operations, and your decision-making patterns is extraordinarily hard for a competitor to replicate. Data and context become the new source of durable competitive advantage — more durable than features, more durable than workflow automation.

Model providers sit at a newly critical chokepoint. As models become load-bearing infrastructure for enterprise operations, dependency on model providers becomes a strategic risk that boards should be actively managing — through diversification, contractual protections, and investment in harness portability.

Early harness-builders will accumulate compounding advantages. Every workflow you encode, every edge case your agent learns to handle, every piece of institutional knowledge you make accessible to your models: these compound over time. The organizations that start building seriously now will, in two years, have harnesses that late movers will find very difficult to match.

Where to Start

The shift described in this post is not a future state. It’s happening now, in production, at organizations across every industry. The question is not whether to engage with it — it’s how to engage with it effectively.

A few principles to guide the work:

Audit your integration landscape. Identify which integrations exist primarily to compensate for the absence of a reasoning layer. These are the first candidates for replacement with a model-mediated approach. Not all integrations should be replaced — some are genuinely about data movement at scale — but many exist because no system was smart enough to figure out the mapping on its own.

Design for context, not just capability. Before deploying a model into any workflow, ask: does it have everything it needs to make a good decision? Build the retrieval and context layers first. A capable model with poor context will often underperform a modest model with excellent context.

Make data accessible. Run a frank assessment of which data assets are clean, documented, and accessible via API — and which are locked in legacy systems, undocumented, or governed by policies that assume only humans will access them. The gaps you find are your AI readiness gaps.

Pick your human-in-the-loop moments deliberately. Not every decision should be automated. But the decisions where human judgment is genuinely necessary should be chosen intentionally, not inherited from system designs made before agents existed.

Treat the harness as a product. The harness that powers your AI operations — the tools, the memory, the guardrails, the orchestration — deserves the same investment and care as any customer-facing product. It will likely become more strategically important than most of them.

The organizations that thrived in the API economy were the ones that understood that software was eating the world, and positioned themselves at the junctions where software connected.

The organizations that will thrive in the model-and-harness economy will be the ones that understand that intelligence is eating software, and that the new junctions are the harnesses that shape what that intelligence can do.

The platform has shifted. The question is whether your organization is building on the new one.
